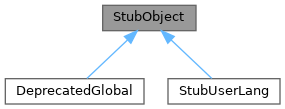

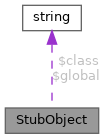

Class to implement stub globals, which are globals that delay loading their associated module code by deferring initialisation until the first method call.

Public Member Functions

| Member | Description |
| --- | --- |
| __call ( $name, $args) | Function called by PHP if no function with that name exists in this object. |
| __construct ( $global=null, $class=null, $params=[]) | |
| _call ( $name, $args) | Function called if any function exists with that name in this object. |
| _newObject () | Create a new object to replace this stub object. |
| _unstub ( $name='_unstub', $level=2) | Creates a new object of the real class and replaces the stub in the global variable with it. |

Static Public Member Functions

| Member | Description |
| --- | --- |
| static isRealObject ( $obj) | Returns a bool indicating whether $obj is a real object, i.e. not a stub object. |
| static unstub (&$obj) | Unstubs an object, if it is a stub object. |

Protected Attributes

| Type | Attribute |
| --- | --- |
| null\|string | $class |
| null\|callable | $factory |
| null\|string | $global |
| array | $params |

Detailed Description

Class to implement stub globals, which are globals that delay loading their associated module code by deferring initialisation until the first method call.

Note on reference parameters:

If the called method takes any parameters by reference, the __call magic here won't work correctly. The solution is to unstub the object before calling the method.
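The effect can be seen in a minimal sketch. `MiniStub` and `DemoCounter` are hypothetical stand-ins written for this illustration, not MediaWiki classes, and the real `StubObject` does more work (it replaces the global variable, for example). The sketch only shows why arguments forwarded through `__call` lose their reference semantics, and how unstubbing first restores them.

```php
<?php
// Hypothetical stand-ins for illustration; not MediaWiki classes.
class DemoCounter {
	// Takes its argument by reference and increments it.
	public function bump( &$n ) {
		$n++;
	}
}

class MiniStub {
	// Create the real object (hard-coded here to keep the sketch short).
	public function realObject() {
		return new DemoCounter();
	}

	// $args holds copies of the caller's arguments, so by-reference
	// parameters of the real method cannot reach the caller's variables.
	public function __call( $name, $args ) {
		return $this->realObject()->$name( ...$args );
	}

	// Replace a stub held in $obj with the real object.
	public static function unstub( &$obj ) {
		if ( $obj instanceof self ) {
			$obj = $obj->realObject();
		}
	}
}

$counter = new MiniStub();
$n = 0;

$counter->bump( $n );          // forwarded through __call
$afterStubCall = $n;           // the caller's $n was not modified

MiniStub::unstub( $counter );  // $counter now holds a DemoCounter
$counter->bump( $n );          // direct call, so the reference works
$afterRealCall = $n;
```

With the real class, the equivalent step would be a call to `StubObject::unstub()` on the variable before invoking the by-reference method.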

Note on unstub loops:

Unstub loops (infinite recursion) sometimes occur when a constructor calls another function, and the other function calls some method of the stub. The best way to avoid this is to make constructors as lightweight as possible, deferring any initialisation which depends on other modules. As a last resort, you can use StubObject::isRealObject() to break the loop, but as a general rule, the stub object mechanism should be transparent, and code which refers to it should be kept to a minimum.
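A minimal sketch of the last-resort guard follows. `MiniStub`, `DemoSkin`, `currentTheme()` and the global name `demoSkin` are all hypothetical and exist only for this illustration; the miniature stub is an assumption about the general shape of the mechanism, not MediaWiki's implementation. The constructor calls a helper, and the helper checks whether the global is still a stub before calling a method on it.

```php
<?php
// Hypothetical miniature of the stub mechanism; not MediaWiki's StubObject.
class MiniStub {
	private $global;
	private $class;

	public function __construct( $global, $class ) {
		$this->global = $global;
		$this->class = $class;
	}

	// True when $obj is an object that is not a stub.
	public static function isRealObject( $obj ) {
		return is_object( $obj ) && !( $obj instanceof self );
	}

	public function __call( $name, $args ) {
		// The global still holds the stub while the constructor below runs.
		$class = $this->class;
		$real = new $class();
		$GLOBALS[$this->global] = $real;
		return $real->$name( ...$args );
	}
}

// Helper that other code, including the constructor, may call.
function currentTheme() {
	if ( !MiniStub::isRealObject( $GLOBALS['demoSkin'] ) ) {
		// Still a stub: calling a method here would re-enter the constructor.
		return 'default';
	}
	return $GLOBALS['demoSkin']->getTheme();
}

class DemoSkin {
	private $theme;

	public function __construct() {
		// Without the guard in currentTheme(), this would recurse forever.
		$this->theme = currentTheme();
	}

	public function getTheme() {
		return $this->theme;
	}
}

$GLOBALS['demoSkin'] = new MiniStub( 'demoSkin', 'DemoSkin' );
$theme = $GLOBALS['demoSkin']->getTheme();
```

The preferred fix remains the one described above: keep the constructor lightweight so that no guard is needed.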

Definition at line 45 of file StubObject.php.

Constructor & Destructor Documentation

◆ __construct()

StubObject::__construct ( $global = null, $class = null, $params = [] )

- Parameters

  - string $global: Name of the global variable.
  - string | callable $class: Name of the class of the real object, or a factory function to call.
  - array $params: Parameters to pass to the constructor of the real object.

Reimplemented in DeprecatedGlobal.

Definition at line 64 of file StubObject.php.
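Since `$class` may be either a class name or a factory function, the real object can be produced in two ways. The sketch below illustrates one way to handle both cases. `newObjectSketch()` is a hypothetical helper, and telling the two cases apart with `is_callable()` is an assumption made for this example, not a statement about how `StubObject` does it.

```php
<?php
// Hypothetical helper for illustration; not part of MediaWiki.
function newObjectSketch( $classOrFactory, array $params = [] ) {
	if ( is_callable( $classOrFactory ) ) {
		// A factory: call it with the parameters.
		return $classOrFactory( ...$params );
	}
	// A class name: pass the parameters to its constructor.
	return new $classOrFactory( ...$params );
}

// Class name plus constructor parameters.
$fromClass = newObjectSketch( 'ArrayObject', [ [ 'a', 'b', 'c' ] ] );

// Factory function plus its parameters.
$fromFactory = newObjectSketch(
	function ( $size ) {
		return new SplFixedArray( $size );
	},
	[ 4 ]
);
```

In both cases `$params` is spread into the call, which matches the documented role of `$params` as the arguments for creating the real object.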

Member Function Documentation

◆ __call()

StubObject::__call ( $name, $args )

Function called by PHP if no function with that name exists in this object.

- Parameters

  - string $name: Name of the function called.
  - array $args: Arguments.

- Returns

- mixed

Definition at line 137 of file StubObject.php.

◆ _call()

StubObject::_call ( $name, $args )

Function called if any function exists with that name in this object.

It is used to unstub the object. It is only used internally: PHP calls self::__call(), which in turn calls this function. This function then calls the function with the same name in the real object.

- Parameters

  - string $name: Name of the function called.
  - array $args: Arguments.

- Returns

- mixed

Definition at line 110 of file StubObject.php.

References $args, $GLOBALS, and _unstub().

Referenced by __call().

◆ _newObject()

StubObject::_newObject ( )

Create a new object to replace this stub object.

- Returns

- object

Reimplemented in DeprecatedGlobal, and StubUserLang.

Definition at line 119 of file StubObject.php.

References $class, $factory, and $params.

Referenced by _unstub().

◆ _unstub()

StubObject::_unstub ( $name = '_unstub', $level = 2 )

This function creates a new object of the real class and replaces the stub in the global variable with it.

This is public, for the convenience of external callers wishing to access properties, e.g. eval.php.

- Parameters

  - string $name: Name of the method called in this object.
  - int $level: Number of levels to go up the stack trace to find the function that called this function.

- Returns

- object The unstubbed version of itself

- Exceptions

  - MWException

Definition at line 153 of file StubObject.php.

References $global, $GLOBALS, _newObject(), wfDebug(), and wfGetCaller().

Referenced by _call(), and StubUserLang\findVariantLink().

◆ isRealObject()

static StubObject::isRealObject ( $obj )

Returns a bool indicating whether $obj is a real object, i.e. not a stub object.

Can be used to break an infinite loop when unstubbing an object.

- Parameters

  - object $obj: Object to check.

- Returns

- bool True if $obj is not an instance of StubObject class.

Definition at line 81 of file StubObject.php.

Referenced by ParserOptions\__construct().

◆ unstub()

static StubObject::unstub ( &$obj )

Unstubs an object, if it is a stub object.

Can be used to break an infinite loop when unstubbing an object or to avoid reference parameter breakage.

- Parameters

  - object &$obj: Object to check.

- Returns

- void

Definition at line 93 of file StubObject.php.

Referenced by ExtParserFunctions\timeCommon().

Member Data Documentation

◆ $class

null|string StubObject::$class (protected)

Definition at line 50 of file StubObject.php.

Referenced by __construct(), and _newObject().

◆ $factory

null|callable StubObject::$factory (protected)

Definition at line 53 of file StubObject.php.

Referenced by _newObject().

◆ $global

null|string StubObject::$global (protected)

Definition at line 47 of file StubObject.php.

Referenced by __construct(), and _unstub().

◆ $params

array StubObject::$params (protected)

Definition at line 56 of file StubObject.php.

Referenced by __construct(), and _newObject().

The documentation for this class was generated from the following file:

- includes/StubObject.php