Job to purge the HTML/file cache for all pages that link to or use another page or file.

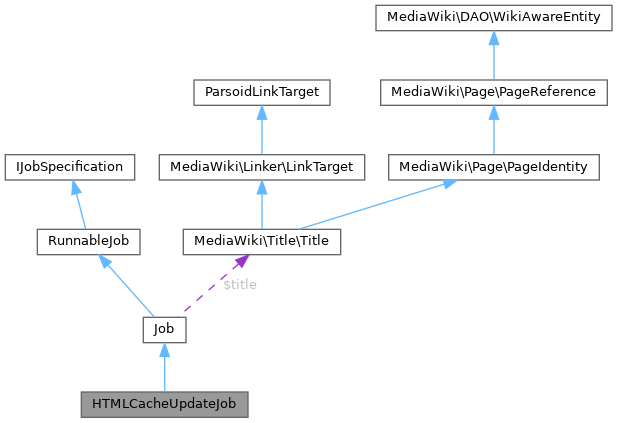

Inherits Job.

Public Member Functions

| Function | Description |
| --- | --- |
| `__construct(Title $title, array $params)` | |
| `getDeduplicationInfo()` | Subclasses may need to override this to make duplication detection work. |
| `run()` | Run the job. |
| `workItemCount()` | |

Public Member Functions inherited from Job

| Function | Description |
| --- | --- |
| `__construct($command, $params = null)` | |
| `allowRetries()` | |
| `getLastError()` | |
| `getMetadata($field = null)` | |
| `getParams()` | |
| `getQueuedTimestamp()` | |
| `getReadyTimestamp()` | |
| `getReleaseTimestamp()` | |
| `getRequestId()` | |
| `getRootJobParams()` | |
| `getTitle()` | |
| `getType()` | |
| `hasExecutionFlag($flag)` | |
| `hasRootJobParams()` | |
| `ignoreDuplicates()` | Whether the queue should reject insertion of this job if a duplicate exists. |
| `isRootJob()` | |
| `setMetadata($field, $value)` | |
| `teardown($status)` | |
| `toString()` | |

Public Member Functions inherited from RunnableJob

| Function | Description |
| --- | --- |
| `tearDown($status)` | Do any final cleanup after run(), deferred updates, and all DB commits happen. |

Static Public Member Functions

| Modifier | Function | Description |
| --- | --- | --- |
| static | `newForBacklinks(PageReference $page, $table, $params = [])` | |

Static Public Member Functions inherited from Job

| Modifier | Function | Description |
| --- | --- | --- |
| static | `factory($command, $params = [])` | Create the appropriate object to handle a specific job. |
| static | `newRootJobParams($key)` | Get "root job" parameters for a task. |

Protected Member Functions

| Function | Description |
| --- | --- |
| `invalidateTitles(array $pages)` | |

Protected Member Functions inherited from Job

| Function | Description |
| --- | --- |
| `addTeardownCallback($callback)` | |
| `setLastError($error)` | |

Additional Inherited Members

Public Attributes inherited from Job

| Type | Attribute | Description |
| --- | --- | --- |
| string | `$command` | |
| array | `$metadata = []` | Additional queue metadata. |
| array | `$params` | Array of job parameters. |

Protected Attributes inherited from Job

| Type | Attribute | Description |
| --- | --- | --- |
| string | `$error` | Text for error that occurred last. |
| int | `$executionFlags = 0` | Bitfield of `JOB_*` class constants. |
| bool | `$removeDuplicates = false` | Expensive jobs may set this to true. |
| callable[] | `$teardownCallbacks = []` | |
| Title | `$title` | |

Detailed Description

Job to purge the HTML/file cache for all pages that link to or use another page or file.

This job comes in two variants:

- a) Recursive jobs to purge caches for backlink pages for a given title. These jobs have `(recursive:true, table:<table>)` set.
- b) Jobs to purge caches for a set of titles (the job title is ignored). These jobs have `(pages:(<page ID>:(<namespace>,<title>),...))` set.
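The two parameter shapes listed above can be sketched as plain PHP arrays. This is a minimal illustration, assuming only the keys named in the list (`recursive`, `table`, `pages`); the helper `describeHtmlCacheJobVariant()` is hypothetical and not part of MediaWiki, and the table name `templatelinks`, page IDs, and titles are illustrative values.

```php
<?php
// Hypothetical helper (not a MediaWiki API): reports which documented
// variant a parameter array corresponds to, based only on its keys.
function describeHtmlCacheJobVariant(array $params): string
{
    if (!empty($params['recursive']) && isset($params['table'])) {
        // Variant a): recursive purge of backlink pages via a backlink table.
        return 'recursive:' . $params['table'];
    }
    if (isset($params['pages']) && is_array($params['pages'])) {
        // Variant b): explicit set of pages; the job title is ignored.
        return 'pages:' . count($params['pages']);
    }
    return 'unknown';
}

// Variant a): illustrative backlink table name.
$recursiveParams = ['recursive' => true, 'table' => 'templatelinks'];

// Variant b): page ID => [namespace, title] for each page to purge.
$pageSetParams = [
    'pages' => [
        101 => [0, 'Main_Page'],
        205 => [10, 'Infobox'],
    ],
];

echo describeHtmlCacheJobVariant($recursiveParams), PHP_EOL; // recursive:templatelinks
echo describeHtmlCacheJobVariant($pageSetParams), PHP_EOL;   // pages:2
```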

Definition at line 38 of file HTMLCacheUpdateJob.php.

Constructor & Destructor Documentation

◆ __construct()

`HTMLCacheUpdateJob::__construct(Title $title, array $params)`

Definition at line 42 of file HTMLCacheUpdateJob.php.

References $params.

Member Function Documentation

◆ getDeduplicationInfo()

`HTMLCacheUpdateJob::getDeduplicationInfo()`

Subclasses may need to override this to make duplication detection work.

The resulting map conveys everything that makes the job unique. This is only checked if ignoreDuplicates() returns true, meaning that duplicate jobs are supposed to be ignored.

- Stability: stable to override
- Returns: array Map of key/values
- Since: 1.21

Reimplemented from Job.

Definition at line 180 of file HTMLCacheUpdateJob.php.
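Since page-set jobs (variant b) ignore the job title, one plausible way to make duplicate detection work for them is to leave the title out of the deduplication map, so two jobs purging the same pages compare equal even when triggered from different titles. The sketch below assumes a map with `type`, `namespace`, `title` and `params` keys and assumes root-job keys (`rootJobSignature`, `rootJobTimestamp`) are excluded; those key names, the job type string `htmlCacheUpdate`, and the helper `dedupInfo()` are assumptions for illustration, not taken from this page.

```php
<?php
// Hypothetical sketch: build a deduplication map for a job.
// Key names (type/namespace/title/params) are assumed for illustration.
function dedupInfo(string $type, int $namespace, string $title, array $params): array
{
    // Assumed: root-job bookkeeping differs between otherwise identical
    // jobs, so it does not contribute to uniqueness.
    unset($params['rootJobSignature'], $params['rootJobTimestamp']);

    $info = [
        'type' => $type,
        'namespace' => $namespace,
        'title' => $title,
        'params' => $params,
    ];

    // Page-set jobs ignore the job title, so drop it from the map.
    if (isset($params['pages'])) {
        unset($info['namespace'], $info['title']);
    }
    return $info;
}

$pages = ['pages' => [101 => [0, 'Main_Page']]];

// Same pages, triggered from two different titles at different times.
$a = dedupInfo('htmlCacheUpdate', 10, 'Infobox', $pages + ['rootJobTimestamp' => '20240101000000']);
$b = dedupInfo('htmlCacheUpdate', 10, 'Navbox', $pages + ['rootJobTimestamp' => '20240102000000']);

var_dump($a === $b); // bool(true)
```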

◆ invalidateTitles()

`HTMLCacheUpdateJob::invalidateTitles(array $pages)` (protected)

- Parameters:
  - `array $pages`: Map of (page ID => (namespace, DB key)) entries

Definition at line 119 of file HTMLCacheUpdateJob.php.
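The `$pages` argument has the same shape as the `pages` parameter of variant b. As a minimal sketch, a caller could check that shape before building such a map; `isValidPageMap()` is a hypothetical helper, not part of MediaWiki, and it assumes page IDs are positive integers and namespaces are integers.

```php
<?php
// Hypothetical helper (not part of MediaWiki): checks that an array has the
// documented shape "page ID => (namespace, DB key)".
function isValidPageMap(array $pages): bool
{
    foreach ($pages as $pageId => $entry) {
        if (!is_int($pageId) || $pageId <= 0) {
            return false; // keys are assumed to be positive page IDs
        }
        // Each value must be a two-element list: [namespace, DB key].
        if (!is_array($entry) || array_keys($entry) !== [0, 1]) {
            return false;
        }
        [$namespace, $dbKey] = $entry;
        if (!is_int($namespace) || !is_string($dbKey) || $dbKey === '') {
            return false;
        }
    }
    return true;
}

// DB keys use underscores in place of spaces, e.g. 'Main_Page'.
$pages = [101 => [0, 'Main_Page'], 205 => [10, 'Infobox']];

var_dump(isValidPageMap($pages));             // bool(true)
echo implode(',', array_keys($pages)), PHP_EOL; // 101,205
```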

References wfTimestampOrNull().

Referenced by run().

◆ newForBacklinks()

`static HTMLCacheUpdateJob::newForBacklinks(PageReference $page, $table, $params = [])`

- Parameters:
  - `PageReference $page`: Page to purge backlink pages from
  - `string $table`: Backlink table name
  - `array $params`: Additional job parameters
- Returns: HTMLCacheUpdateJob

Definition at line 63 of file HTMLCacheUpdateJob.php.

References $params, `Job::$title`, and `Job::newRootJobParams()`.
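The references above indicate that `newForBacklinks()` combines `$params` with root-job parameters from `Job::newRootJobParams()`. The sketch below shows one way such a merge can be written with PHP's array union operator `+`, which keeps the left-hand value when keys collide, so extra parameters cannot override `recursive` or `table`. `fakeRootJobParams()` and `backlinkJobParams()` are hypothetical stand-ins; the key names `rootJobSignature`/`rootJobTimestamp`, the root-job key format, and the option `exampleOption` are assumptions for illustration.

```php
<?php
// Hypothetical stand-in for Job::newRootJobParams(): derives a signature
// from a key string. Key names are assumed for illustration.
function fakeRootJobParams(string $key): array
{
    return [
        'rootJobSignature' => sha1($key),
        'rootJobTimestamp' => gmdate('YmdHis'),
    ];
}

// Sketch of assembling parameters for a recursive backlink purge.
// The union operator (+) keeps the first value seen for each key,
// so 'recursive' and 'table' cannot be overridden by $extra.
function backlinkJobParams(string $pageText, string $table, array $extra = []): array
{
    return ['table' => $table, 'recursive' => true]
        + fakeRootJobParams("htmlCacheUpdate:{$table}:{$pageText}")
        + $extra;
}

$params = backlinkJobParams(
    'Template:Infobox',
    'templatelinks',
    ['table' => 'pagelinks', 'exampleOption' => 'x'] // 'table' here is ignored
);

echo $params['table'], PHP_EOL;         // templatelinks
echo $params['exampleOption'], PHP_EOL; // x
```

The design choice here is ordering: placing the fixed keys first means caller-supplied parameters can only add information, never change which variant the job is.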

◆ run()

`HTMLCacheUpdateJob::run()`

Run the job.

- Returns: bool Success

Implements RunnableJob.

Definition at line 76 of file HTMLCacheUpdateJob.php.

References `Job::getRootJobParams()`, `invalidateTitles()`, and `BacklinkJobUtils::partitionBacklinkJob()`.
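`run()` relies on `BacklinkJobUtils::partitionBacklinkJob()` to split recursive work. The sketch below is a simplified illustration of that general idea only, not its implementation: it splits an already-known page map into parameter arrays for leaf page-set jobs (variant b) of at most `$batchSize` pages each. `partitionIntoLeafParams()` is hypothetical, and carrying root-job parameters into each leaf is an assumption.

```php
<?php
// Hypothetical, simplified sketch: split a "page ID => [namespace, DB key]"
// map into parameter arrays for leaf page-set jobs, each covering at most
// $batchSize pages. Not the BacklinkJobUtils implementation.
function partitionIntoLeafParams(array $pages, int $batchSize, array $rootParams = []): array
{
    if ($batchSize < 1) {
        throw new InvalidArgumentException('Batch size must be at least 1');
    }
    $leaves = [];
    // preserve_keys = true keeps the page IDs as keys in each chunk.
    foreach (array_chunk($pages, $batchSize, true) as $chunk) {
        // Assumed: root-job info is copied so leaves can be tied back
        // to the job that triggered them.
        $leaves[] = ['pages' => $chunk] + $rootParams;
    }
    return $leaves;
}

$pages = [
    11 => [0, 'Alpha'],
    12 => [0, 'Beta'],
    13 => [0, 'Gamma'],
];
$leaves = partitionIntoLeafParams($pages, 2, ['rootJobTimestamp' => '20240101000000']);

echo count($leaves), PHP_EOL;                                // 2
echo implode(',', array_keys($leaves[1]['pages'])), PHP_EOL; // 13
```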

◆ workItemCount()

`HTMLCacheUpdateJob::workItemCount()`

- Stability: stable to override
- Returns: int

Reimplemented from Job.

Definition at line 194 of file HTMLCacheUpdateJob.php.
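This page states only that `workItemCount()` returns an int. One plausible reading, sketched below purely as an assumption, is a count of pages the job purges directly: zero for a recursive job (which only spawns further jobs), the size of the `pages` map for a page-set job, and one otherwise. `sketchWorkItemCount()` is hypothetical.

```php
<?php
// Hypothetical sketch of a work-item count for the two documented variants.
// The exact values MediaWiki returns are not stated on this page.
function sketchWorkItemCount(array $params): int
{
    if (!empty($params['recursive'])) {
        return 0; // assumed: a recursive job purges nothing itself
    }
    if (isset($params['pages']) && is_array($params['pages'])) {
        return count($params['pages']); // one item per listed page
    }
    return 1; // assumed: otherwise only the job title is purged
}

echo sketchWorkItemCount(['recursive' => true, 'table' => 'templatelinks']), PHP_EOL; // 0
echo sketchWorkItemCount(['pages' => [1 => [0, 'A'], 2 => [0, 'B']]]), PHP_EOL;      // 2
```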

The documentation for this class was generated from the following file:

- includes/jobqueue/jobs/HTMLCacheUpdateJob.php